Loading and saving cache from ephemeral CI runners via the network can take a considerable amount of time, negating

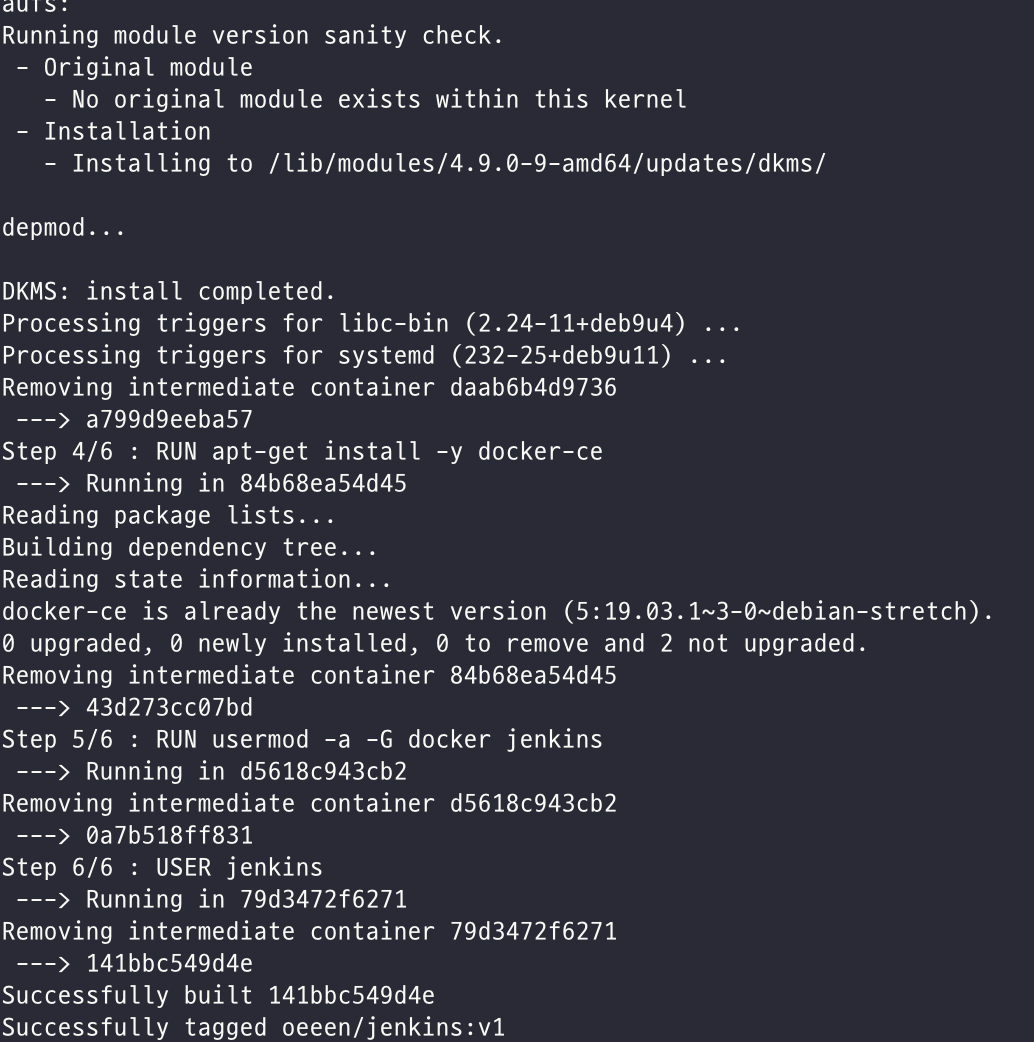

The first step is knowing the disk usage of Docker. We can use the docker system df command to get a breakdown of how much disk space is being taken up by various artifacts.

    docker system df

    TYPE            TOTAL     ACTIVE    SIZE      RECLAIMABLE
    Images          138       34        36.18GB   34.15GB (94%)
    Containers      74        18        834.8kB   834.6kB (99%)
    Local Volumes   118       6         15.31GB   15.14GB (98%)
    Build Cache     245       0         1.13GB    1.13GB

Docker uses 36.18 GB for images, 834.8 kB for containers, 15.31 GB for local volumes, and 1.13 GB for the Docker build cache. This comes to about 50 GB of space in total, and a large chunk of it is reclaimable.

What space can we claim back without affecting Docker build performance?

It's generally quite safe to remove unused Docker images and layers, unless you are building in CI. For CI, clearing the layers might affect performance, so it's better not to do it.

Removing containers from the Docker cache

We can use the docker container prune command to clear the disk space used by containers. This command removes all stopped containers from the system. We can omit the -f flag here and in subsequent examples to get a confirmation prompt before artifacts are removed.

A broader cleanup that also covers unused images, volumes, and build cache is:

    docker system prune --volumes -a -f

Managing Docker build cache in CI

If you are building Docker images in a CI environment, you can, of course, use the above commands as well. However, your builds might not be fully taking advantage of the Docker build cache for the following structural reasons:

- In a CI environment with ephemeral runners, such as GitHub Actions or GitLab CI, build cache isn't persisted across builds without saving/loading it over the network to somewhere off of the ephemeral runner. By default, all CI runners are ephemeral unless you run your own.
- Saving and loading the cache is therefore slow because the network transfer speed is slow.
- If there is disk space, it's usually capped at 10 to 15 GB. Thus, if you're building large images with large layers, you will likely exhaust it.

Even if you are building very small images and only keep essential layers in the Docker cache on each CI runner, your builds will likely not use the cache and thus be quite slow, as computing each layer on every build can take a while.
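To double-check the totals in the docker system df breakdown above, the output can be parsed and summed. The following is a minimal Python sketch, not part of the original article: `parse_df` is a hypothetical helper, the sample text is the output quoted above, and the unit table assumes Docker prints decimal (power-of-1000) sizes.

```python
import re

# Sample output copied from the `docker system df` run quoted in the article.
DF_OUTPUT = """\
TYPE            TOTAL     ACTIVE    SIZE      RECLAIMABLE
Images          138       34        36.18GB   34.15GB (94%)
Containers      74        18        834.8kB   834.6kB (99%)
Local Volumes   118       6         15.31GB   15.14GB (98%)
Build Cache     245       0         1.13GB    1.13GB
"""

# Assumption: sizes use decimal units (1 GB = 1e9 bytes).
UNIT_BYTES = {"B": 1, "kB": 1e3, "MB": 1e6, "GB": 1e9, "TB": 1e12}
SIZE_RE = re.compile(r"(\d+(?:\.\d+)?)(kB|MB|GB|TB|B)")


def parse_df(text):
    """Return {type: (size_bytes, reclaimable_bytes)} from `docker system df` text."""
    usage = {}
    for line in text.strip().splitlines()[1:]:  # skip the header row
        sizes = SIZE_RE.findall(line)
        if len(sizes) < 2:
            continue  # not a data row we understand
        # The type name is the first column; columns are split by 2+ spaces.
        name = re.split(r"\s{2,}", line.strip())[0]
        size, reclaimable = (float(v) * UNIT_BYTES[u] for v, u in sizes[:2])
        usage[name] = (size, reclaimable)
    return usage


usage = parse_df(DF_OUTPUT)
total_gb = sum(size for size, _ in usage.values()) / 1e9
reclaimable_gb = sum(rec for _, rec in usage.values()) / 1e9
print(f"{total_gb:.2f} GB used, {reclaimable_gb:.2f} GB reclaimable")
# prints: 52.62 GB used, 50.42 GB reclaimable
```

On a real machine, the text passed to `parse_df` could come from running docker system df through `subprocess.run`; that step is left out here so the sketch runs without Docker installed.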

Depot launches native BuildKit cloud builders for both Intel and Arm. If you'd rather not manage artifacts like Docker layer cache, you can use Depot's builders for your image builds and get instant shared caching on every build.

A short refresher on Docker caching

Docker uses layer caching to reuse previously computed build results. Each instruction in a Dockerfile is associated with a layer that contains the changes caused by executing that instruction. If previous layers, as well as any inputs to an instruction, haven't changed, and the instruction has already been run and cached previously, Docker will use the cached layer for it. Otherwise, Docker will rebuild that layer and all layers that follow it.

Layers for which the hash of the inputs (such as source code files on disk or the parent layer) hasn't changed get loaded from the cache and reused. Layers for which the hash of the inputs has changed get rebuilt.

Using a cached layer is much faster than recomputing an instruction from scratch. So, generally, you want as much of your Docker build as possible to come from the cache and to only rebuild layers that have changed since the last build. One of the main factors that affects how many of the layers in your image need to be rebuilt is the ordering of operations in your Dockerfile.
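The caching rule described above (a layer is reused only if its parent layer and its inputs are unchanged, and a miss invalidates everything after it) can be illustrated with a toy model. This is a simplified Python sketch, not how BuildKit actually computes cache keys: `layer_keys`, `build`, and `dockerfile` are hypothetical helpers, and the instructions and input digests such as `src-v1` are made up for illustration.

```python
import hashlib


def layer_keys(steps):
    """Return one cache key per step, each chained to the previous key.

    `steps` is a list of (instruction, input_digest) pairs; input_digest
    is a stand-in for the contents of the files the instruction reads.
    """
    keys, parent = [], ""
    for instruction, input_digest in steps:
        material = f"{parent}|{instruction}|{input_digest}".encode()
        parent = hashlib.sha256(material).hexdigest()
        keys.append(parent)
    return keys


def build(steps, cache):
    """Mark each step 'cached' or 'rebuilt', then store its key in `cache`."""
    statuses = []
    for key in layer_keys(steps):
        statuses.append("cached" if key in cache else "rebuilt")
        cache.add(key)
    return statuses


def dockerfile(source_version, copy_source_first):
    """Two hypothetical orderings of the same build steps."""
    copy_src = ("COPY . .", source_version)
    deps = [("COPY package.json .", "pkg-v1"), ("RUN npm install", "")]
    base = [("FROM node:20", "")]
    return base + ([copy_src] + deps if copy_source_first else deps + [copy_src])


# Source copied early: editing the source invalidates every later layer.
cache = set()
build(dockerfile("src-v1", copy_source_first=True), cache)
early = build(dockerfile("src-v2", copy_source_first=True), cache)

# Source copied last: only the final layer is rebuilt after the same edit.
cache = set()
build(dockerfile("src-v1", copy_source_first=False), cache)
late = build(dockerfile("src-v2", copy_source_first=False), cache)

print(early)  # ['cached', 'rebuilt', 'rebuilt', 'rebuilt']
print(late)   # ['cached', 'cached', 'cached', 'rebuilt']
```

In this model, the same source edit costs three rebuilt layers in one ordering and one in the other, which is why instructions whose inputs change often are usually placed as late in the Dockerfile as possible.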
